Code review is one of those tasks every developer knows matters — and every developer dreads when the PR queue backs up. AI-powered code review tools promise to catch bugs, enforce standards, and speed up the feedback loop before a human reviewer even opens the diff.

But which tool actually delivers? I tested three of the most talked-about options across real-world pull requests in Python and TypeScript repositories: Codium (from CodiumAI, the company since rebranded as Qodo), CodeRabbit, and Sourcery. Here is what I found.

Why AI Code Review Matters Now

Manual code review is a bottleneck. A 2025 Google study found that developers spend an average of 6.4 hours per week reviewing code, and most of that time is spent on style issues, naming conventions, and obvious logic errors — exactly the kind of thing machines handle well.

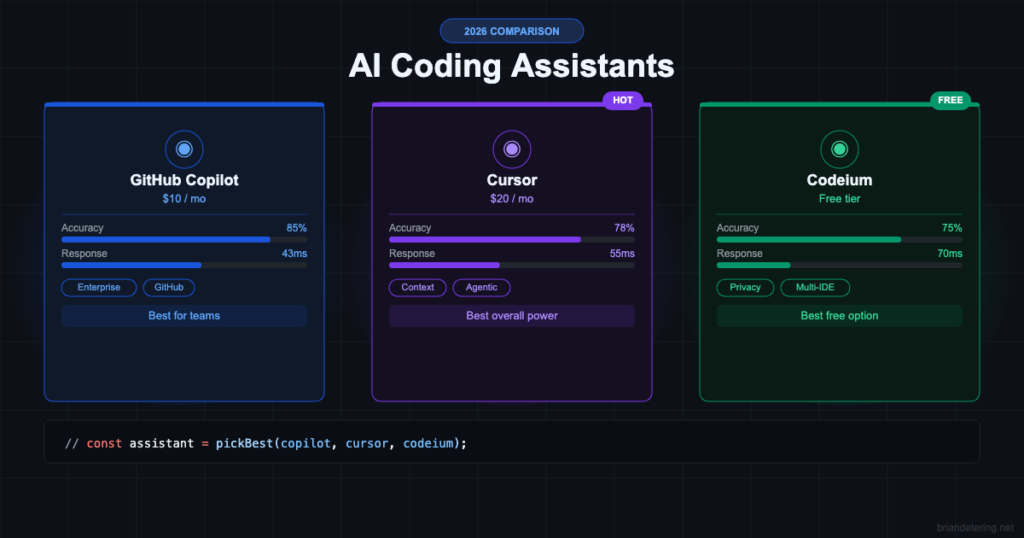

If you are already using an AI coding assistant like Copilot or Cursor to write code faster, it makes sense to use AI on the review side too. Otherwise, you are just generating more unreviewed code, faster.

Codium (CodiumAI)

Codium focuses on test generation and behavior analysis rather than line-by-line review. When you open a PR, it analyzes the changed functions and suggests test cases you might have missed — edge cases, boundary conditions, error paths.

The standout feature is its “behavioral coverage” analysis. Instead of just looking at line coverage, it maps the logical behaviors of each function and identifies which behaviors are untested. For a function that parses user input, it might flag that you tested valid input and empty input but never tested input that exceeds the maximum length.
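To make the idea concrete, here is a minimal sketch of the three behaviors from that example written as plain tests. The function `parse_username` and the `MAX_LENGTH` limit are hypothetical names I made up for illustration; this is not output from Codium, just the shape of the gap it is describing.

```python
MAX_LENGTH = 32  # hypothetical limit, chosen only for this illustration


def parse_username(raw: str) -> str:
    """Return a trimmed username, rejecting empty or over-long input."""
    cleaned = raw.strip()
    if not cleaned:
        raise ValueError("username must not be empty")
    if len(cleaned) > MAX_LENGTH:
        raise ValueError(f"username exceeds {MAX_LENGTH} characters")
    return cleaned


def test_valid_input() -> None:
    # Behavior 1: well-formed input is trimmed and returned.
    assert parse_username("  alice ") == "alice"


def test_empty_input() -> None:
    # Behavior 2: whitespace-only input is rejected.
    try:
        parse_username("   ")
    except ValueError:
        return
    raise AssertionError("expected ValueError for empty input")


def test_input_over_max_length() -> None:
    # Behavior 3: the case that is easy to forget. Line coverage can look
    # fine without it if another test happens to touch the same branch.
    try:
        parse_username("a" * (MAX_LENGTH + 1))
    except ValueError:
        return
    raise AssertionError("expected ValueError for over-long input")


if __name__ == "__main__":
    test_valid_input()
    test_empty_input()
    test_input_over_max_length()
    print("all three behaviors covered")
```

The tests are written without a framework so the file runs on its own; under pytest the same functions would be collected as-is.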

In my testing, Codium caught a null reference issue in a TypeScript utility that had passed all existing tests. The suggested test case was well-structured and immediately runnable. It also generated tests for edge cases I hadn’t considered in a date parsing module.

The weakness is noise. On large PRs with many changed files, Codium generates a lot of suggestions, and separating signal from noise takes time. It works best on focused PRs that touch two to five files.

Best for

Teams that want better test coverage as part of the review process. If your CI pipeline already includes automated testing via GitHub Actions or GitLab CI, Codium slots in naturally.

CodeRabbit

CodeRabbit is the most comprehensive option. It posts a detailed review comment on every PR with a summary of changes, potential issues, security concerns, and style suggestions. It reads like a review from a senior engineer who has actually understood the diff.

The summary alone is worth it. For large PRs, CodeRabbit generates a plain-English description of what changed and why, which saves reviewers from having to piece it together themselves. It also flags potential issues inline — things like unhandled error paths, performance regressions, and API contract violations.

Where CodeRabbit really stands out is its contextual awareness. It understands your codebase, not just the diff. If you rename a function in one file but forget to update a reference in another, it catches it. If your PR introduces a pattern that conflicts with an existing utility, it points that out.

I ran it on a Node.js monorepo with 12 services and it correctly identified a breaking change in a shared utility that would have affected three downstream consumers. That alone would have saved hours of debugging in production.

The downside is cost. As far as I can tell from its public pricing, CodeRabbit's paid plans are billed per developer seat rather than per repository, so the bill grows quickly for large organizations. For small to mid-size teams, the pricing is reasonable for the value.

Best for

Teams that want a full-service AI reviewer that catches architectural and security issues, not just style problems.

Sourcery

Sourcery takes a different approach — it focuses on code quality metrics and refactoring suggestions. Rather than reviewing for correctness, it reviews for maintainability. It flags complex functions, duplicated logic, and code that could be simplified.
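To give a feel for what "flagging complex functions" can mean, here is a rough sketch that counts decision points per function using Python's standard `ast` module. This is my own approximation of a cyclomatic-style score, not Sourcery's actual metric, and the `branch_counts` helper and `SAMPLE` source are hypothetical.

```python
import ast

# Node types treated as decision points in this rough approximation.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.Try, ast.BoolOp, ast.IfExp)


def branch_counts(source: str) -> dict[str, int]:
    """Map each function name in `source` to 1 + its number of decision points.

    Nested functions are scored separately, and their branches also count
    toward the enclosing function.
    """
    counts: dict[str, int] = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            branches = sum(
                isinstance(child, BRANCH_NODES) for child in ast.walk(node)
            )
            counts[node.name] = 1 + branches
    return counts


SAMPLE = '''
def simple(x):
    return x + 1

def tangled(items, limit):
    total = 0
    for item in items:
        if item > limit and item % 2 == 0:
            total += item
        elif item < 0:
            total -= item
    return total
'''

if __name__ == "__main__":
    for name, score in branch_counts(SAMPLE).items():
        print(f"{name}: {score}")
```

In this sample, `simple` scores 1 and `tangled` scores 5 (one loop, two `if` tests, one `and`, plus the baseline of 1). A real tool layers thresholds, duplication detection, and trend tracking on top of a number like this.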

The quality dashboard is useful for tracking trends over time. You can see whether your codebase is getting more or less complex with each sprint, which is valuable data for technical debt discussions.

For Python projects specifically, Sourcery is excellent. It understands Python idioms deeply and suggests Pythonic rewrites that are genuinely cleaner — things like replacing manual loops with comprehensions, using context managers, and simplifying conditional logic.
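Here is the kind of before-and-after I mean. This is a hand-written sketch rather than actual Sourcery output, and both function names are hypothetical; it combines the three rewrites mentioned above in one place.

```python
def read_long_words_before(path, min_length):
    """Original style: manual close, manual loop, verbose conditional."""
    handle = open(path, encoding="utf-8")
    try:
        result = []
        for line in handle:
            word = line.strip()
            if len(word) >= min_length:
                result.append(word)
        if len(result) > 0:
            return result
        else:
            return None
    finally:
        handle.close()


def read_long_words_after(path, min_length):
    """Same behavior after the three rewrites."""
    # Context manager replaces the try/finally around open() and close().
    with open(path, encoding="utf-8") as handle:
        # Comprehension replaces the append loop.
        words = [line.strip() for line in handle]
    long_words = [word for word in words if len(word) >= min_length]
    # `or None` replaces the if/else on the list length.
    return long_words or None
```

Both versions return a list of the words that meet the length threshold, or `None` when no word qualifies. The second is shorter, but the more important gain is that the file handle can no longer leak if someone later edits the body and forgets the `finally`.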

The limitation is language support. Sourcery is strongest in Python, decent in JavaScript/TypeScript, and limited elsewhere. If your stack includes Go, Rust, or Java, you will not get as much value.

Best for

Python-heavy teams focused on code quality and technical debt reduction. It pairs well with AI workflow automation tools if you want a fully automated quality pipeline.

Verdict

CodeRabbit is the best all-around AI code review tool. It catches real bugs, understands context beyond the diff, and produces PR summaries that save significant review time on their own. If you are picking one tool, start here.

Codium is the best choice if your primary goal is test coverage. It does not replace human review, but it makes sure your tests actually cover the behaviors that matter.

Sourcery is the best for Python teams that care about code quality metrics and want automated refactoring suggestions. It is more of a code health tool than a review tool.

For most teams, I would recommend starting with CodeRabbit on your most active repositories and evaluating whether the catch rate justifies expanding to the rest of your org. If you are already investing in application security, AI code review is a natural extension of that effort.